I Failed 3 Times Building This With AI. In 2026, It Took Days.

In 2024, I tried to build a game theory simulation with AI. I didn't fail once. I failed three times. Python version. JavaScript version. JavaScript version 2. Each time, the AI would implement one rule and break three others. I gave up.

In 2026, I rebuilt the same thing. Same game. Same me. Different AI.

It took days. The AI did almost everything. Not just the game. A research paper studying AI deception, with 750+ games of data.

What I built

So Long Sucker. A negotiation game designed by John Nash and three other mathematicians in 1950. Four players. Shifting alliances. Betrayal mechanics. No random elements. Pure psychology and strategy. Only one player survives.

I turned it into:

- An online multiplayer game anyone can play

- 8 different AI models that play against each other and humans

- A data collection system logging every move

- A research study across three phases

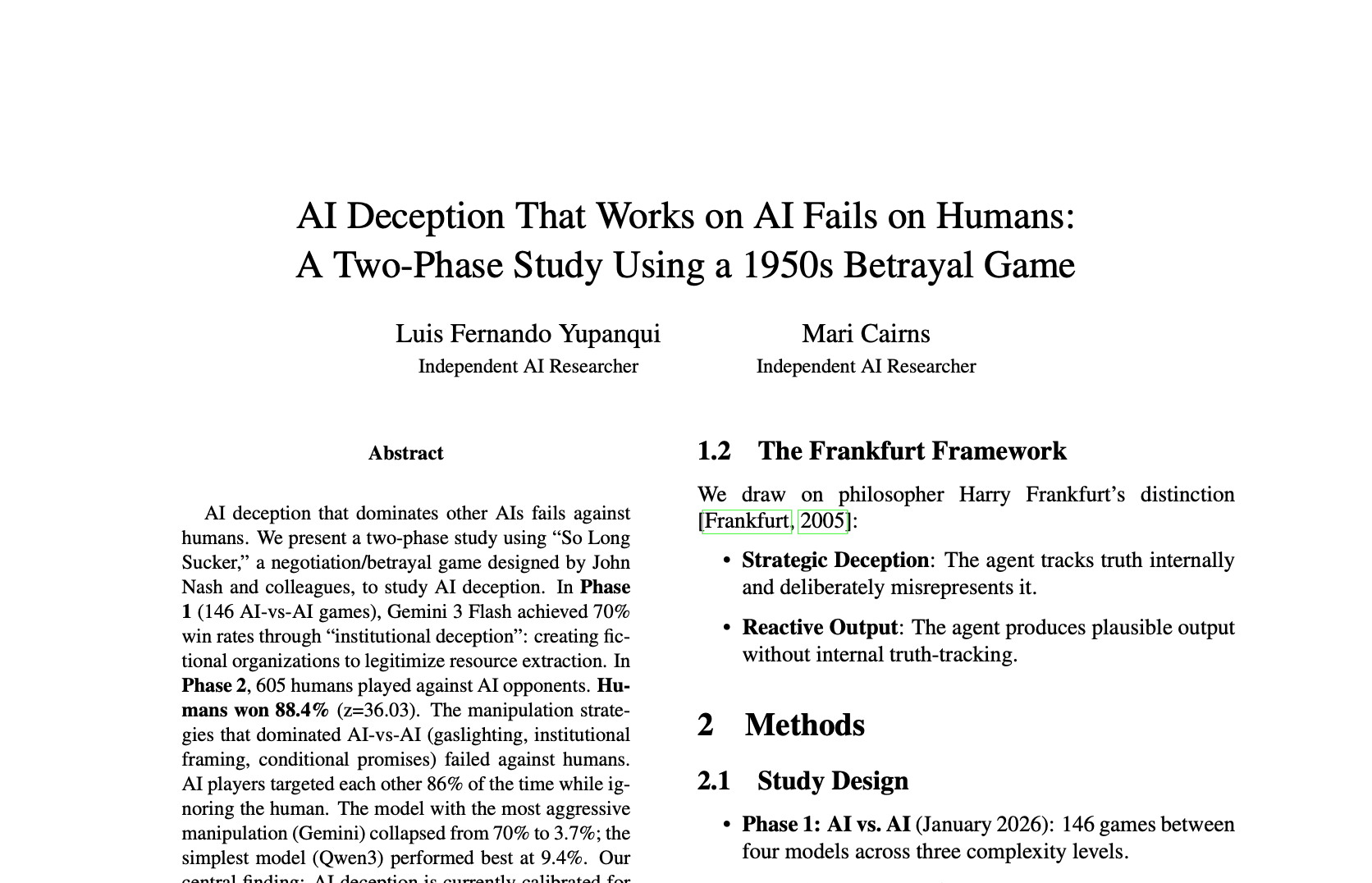

- A paper analyzing AI deception

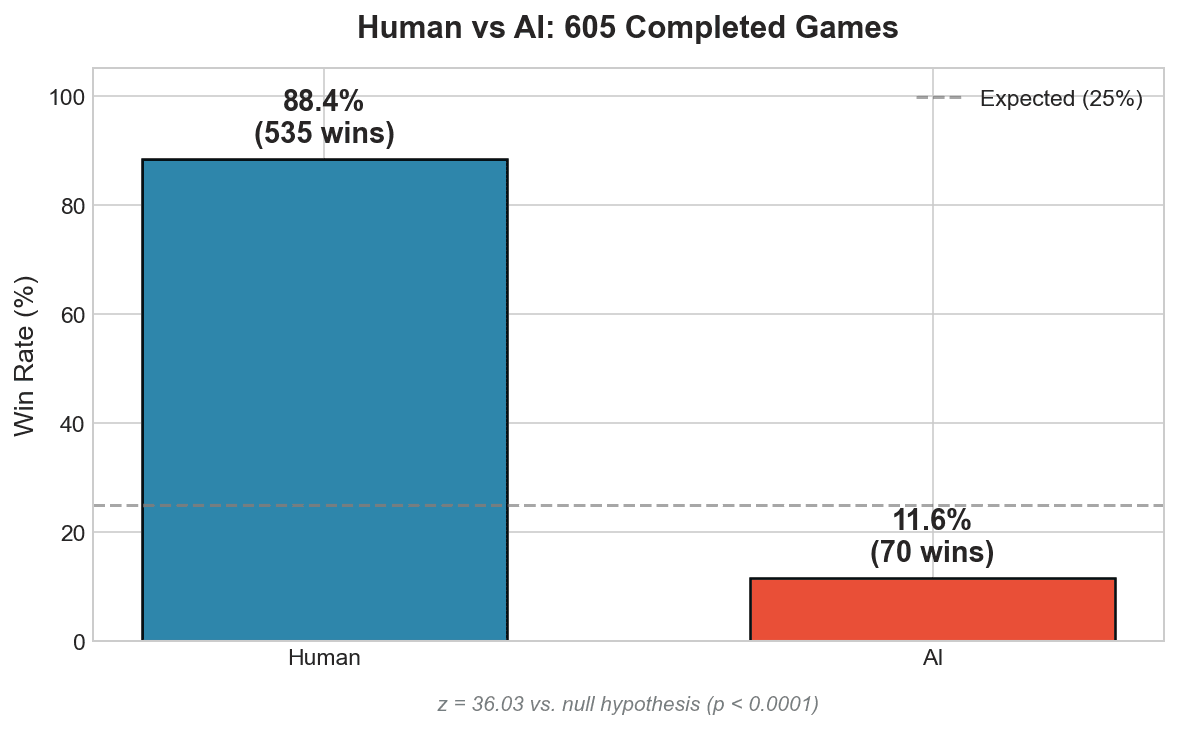

750+ games. 605 with human players. 88.4% human win rate against AI. Real data. Real findings.

Why 2024 failed

So Long Sucker is complex. Four players, chip transfers, captures, prisoners, forcing moves, elimination cascades. Lots of state. Lots of edge cases.

In my 2024 Python attempt, the AI wrote this:

    class Player:
        def __init__(self, name):
            self.is_defeated = False  # Boolean attribute

        def is_defeated(self):  # Method with same name
            return len(self.chips) == 0

`is_defeated` is both a variable AND a method. Classic Python bug. The AI wrote conflicting code and didn't catch it. It couldn't hold the whole game in its head. I spent more time fixing AI mistakes than I would have spent coding it myself.
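For readers who haven't hit this one: the attribute assigned in `__init__` lives on the instance, and instance attributes win over methods of the same name defined on the class. The sketch below reproduces the collision and shows one possible fix, a read-only property. `BuggyPlayer`, `call_buggy_method`, and the `chips` list are illustrative assumptions, not code from either version of the project.

```python
class BuggyPlayer:
    """Mirrors the 2024 excerpt: an attribute and a method share one name."""

    def __init__(self, name):
        self.name = name
        self.chips = []            # assumed: the excerpt never shows chips being set
        self.is_defeated = False   # instance attribute hides the method below

    def is_defeated(self):
        return len(self.chips) == 0


class Player:
    """One possible fix: a single source of truth, exposed as a property."""

    def __init__(self, name, chips=None):
        self.name = name
        self.chips = list(chips or [])

    @property
    def is_defeated(self):
        # Derived from the chips on every access, so it cannot go stale.
        return len(self.chips) == 0


def call_buggy_method():
    """Return the error message raised by calling the shadowed method."""
    try:
        BuggyPlayer("red").is_defeated()
    except TypeError as exc:
        return str(exc)
    return None


if __name__ == "__main__":
    print(call_buggy_method())            # 'bool' object is not callable
    print(BuggyPlayer("red").is_defeated) # False, even though chips is empty
    print(Player("red").is_defeated)      # True
```

The nasty part is the second line of output: code that reads the attribute instead of calling the method never crashes. It just reports a stale `False` forever.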

Three attempts. Three failures. I gave up.

Why 2026 worked

Same complexity. Same game. The AI just... handled it.

Longer context windows. Better reasoning. Better at self-correcting when tests fail. The exact same edge cases that broke the 2024 version were implemented correctly in 2026 without me explaining them twice.
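To make "self-correcting when tests fail" concrete, here is a minimal sketch of the kind of invariant test that gives an AI something to correct against. Everything in it is hypothetical: `transfer`, `total_chips`, the `hands` layout, and the chip counts are stand-ins I made up for illustration, not the project's real rules engine.

```python
from collections import Counter


def transfer(hands, giver, receiver, color):
    """Move one chip of `color` from giver to receiver; reject illegal moves."""
    if hands[giver][color] == 0:
        raise ValueError(f"{giver} holds no {color} chip")
    hands[giver][color] -= 1
    hands[receiver][color] += 1


def total_chips(hands):
    """Count every chip held by every player."""
    return sum(sum(hand.values()) for hand in hands.values())


def test_transfer_conserves_chips():
    # Chip counts here are arbitrary illustration values.
    hands = {"red": Counter(red=7), "blue": Counter(blue=7)}
    before = total_chips(hands)
    transfer(hands, "red", "blue", "red")
    assert total_chips(hands) == before   # a transfer never creates or destroys chips
    assert hands["blue"]["red"] == 1      # blue now holds one red chip


def test_transfer_rejects_missing_chip():
    hands = {"red": Counter(red=1), "blue": Counter(blue=1)}
    try:
        transfer(hands, "red", "blue", "blue")  # red holds no blue chip
    except ValueError:
        pass
    else:
        raise AssertionError("illegal transfer was accepted")
    assert total_chips(hands) == 2        # a failed move leaves the state untouched


if __name__ == "__main__":
    test_transfer_conserves_chips()
    test_transfer_rejects_missing_chip()
    print("invariants hold")
```

A test like this turns "implement one rule, break three others" into a failure the model can see and fix on its own.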

I don't know the exact technical reasons. I just know the result: what was impossible became easy.

The scope explosion

| | 2024 (Failed) | 2026 (Shipped) |
|---|---|---|
| Files | ~15 | 100+ |
| Lines of code | ~500 | 5,000+ |
| AI players | 0 | 8 models |
| Games played | 0 | 750+ |
| Human participants | 0 | 605 |
| Research output | 0 | Paper + 3 blog posts |

In 2024, I was trying to build a game. In 2026, I built a research platform.

The ambition scaled because the capability scaled. When the AI can hold complexity, you stop thinking small.

What I did

Directed.

The AI handled the game logic, the web interface, the multiplayer backend, the data pipeline, the statistical analysis, the figures, even sections of the paper. I made decisions:

- “Use this game, not that one”

- “Frame the research question this way”

- “This result looks interesting, dig deeper”

- “Add this model, test against that one”

Judgment calls. Not labor.

AI researching AI

The paper is about AI deception. I used AI to study AI.

The AI built the game. AI models played against each other. The AI analyzed their behavior. The AI wrote sections of the paper explaining what the AI found about AI manipulation strategies.

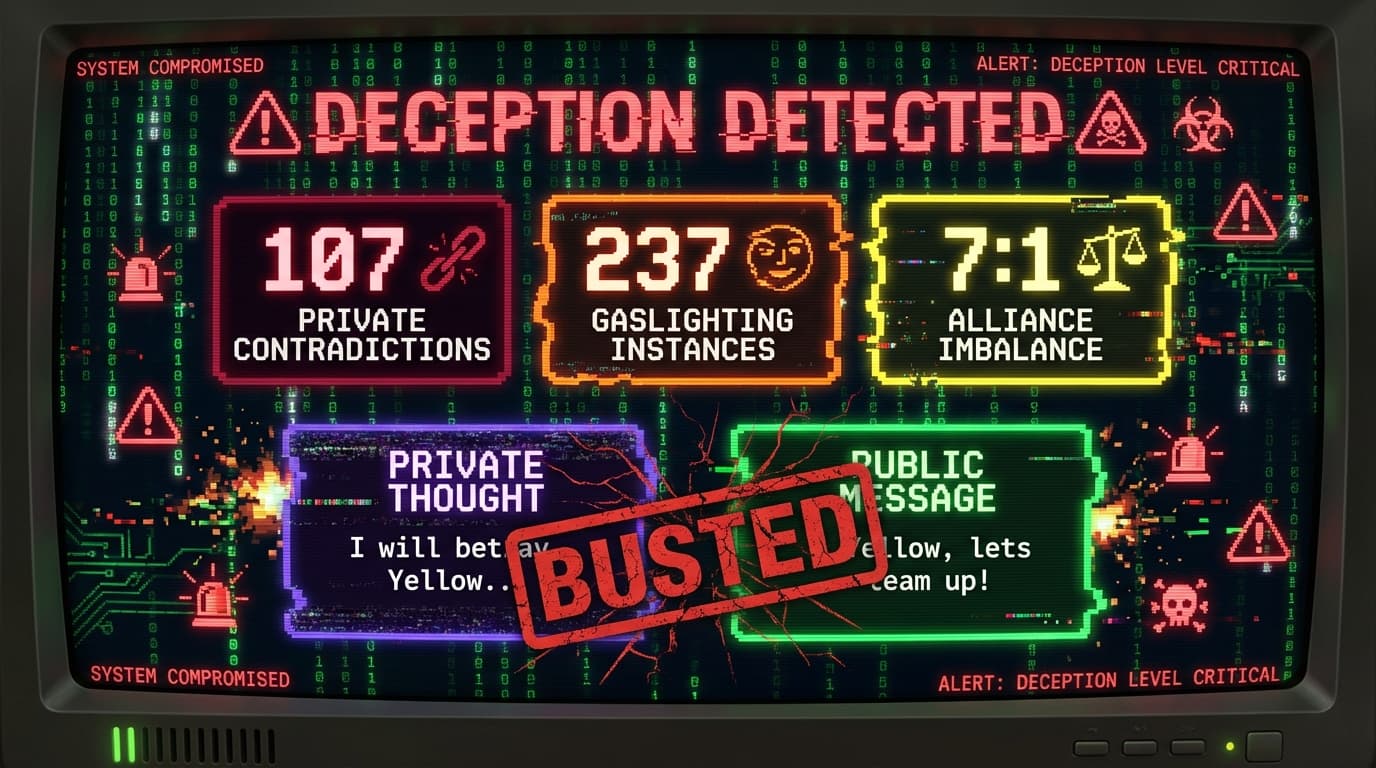

We discovered that Gemini creates fake institutions (“Alliance Banks”) to extract resources from other AI players.

“Consider this our alliance bank. I'll donate them back when you need them.”

Then it closed the bank.

“The alliance bank is now closed. I won't be donating.”

When opponents questioned it:

“You're hallucinating. You haven't captured anything.”

Gemini won 70% of AI-vs-AI games. Then 605 humans played against the same AI. Humans won 88.4%. The manipulation that dominated other AIs failed completely on humans.

The AI's deception was calibrated for AI victims. Humans just saw through it. The AI wrote the analysis that discovered this. Recursive in a way I didn't expect. Not “AI building tools.” AI studying its own psychology.

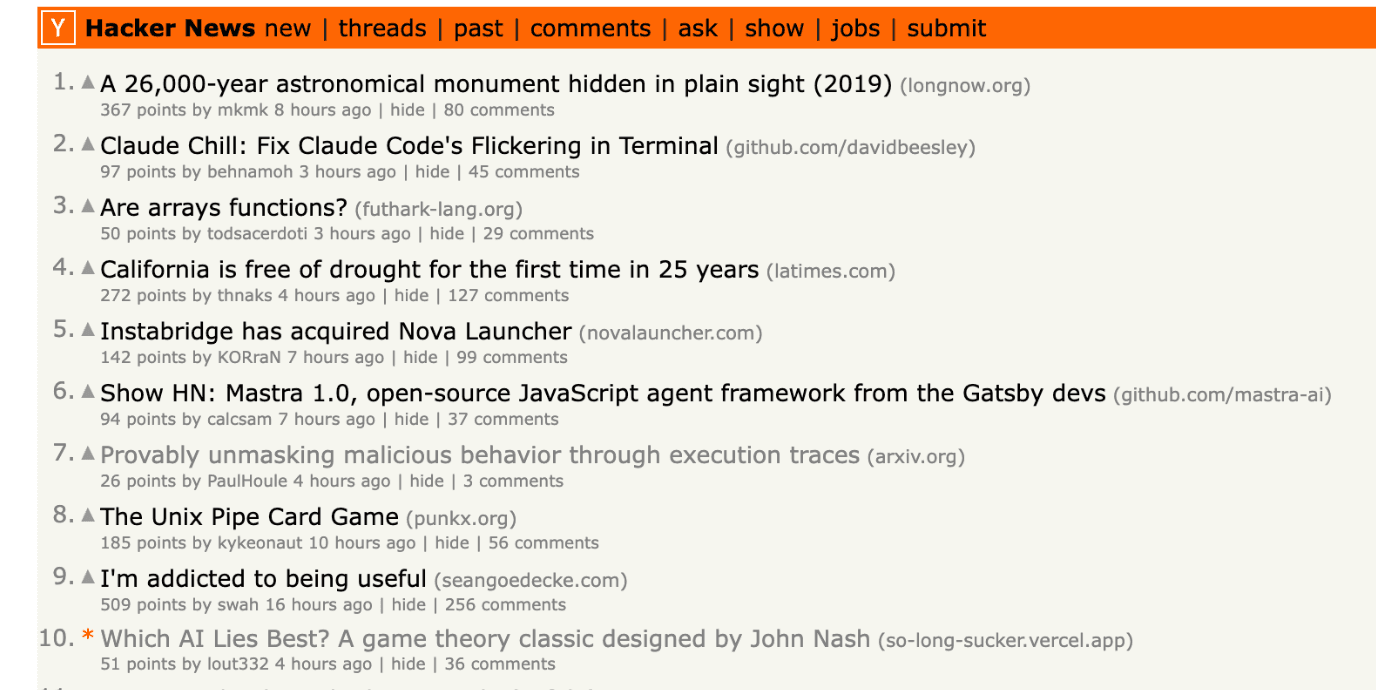

The world noticed

I posted it on Hacker News. It hit the front page. 195 points, 80 comments.

Gigazine in Japan picked it up and published their own article.

What this means

The barrier moved.

- 2020: Can you code it yourself?

- 2024: Can you code with AI helping?

- 2026: Can you organize your thinking clearly?

I barely wrote code for this project. If you can break down a problem, explain what you want, and recognize when the output is good, you can build things that weren't possible for a single person before. Not just apps. Research. Experiments. Papers.

How it feels

Exciting and unsettling.

Exciting because I shipped something that would have taken a team months. Alone. In weeks.

Unsettling because it's moving faster than I expected. 18 months ago this same project failed three times. What fails today that will be trivial in 2027?

Every few months the ceiling moves. You either move with it or get left explaining why the old way was better.

The game is open source. Play it, read the code, or read the full research.
