I gave an AI my full psychology across 878 sessions. It predicts my behavior before I do.

878 conversations. 86 days. 40,417 messages. All sitting in a 3.2GB SQLite database.

I queried it. Here's what showed up.

Setup

Since November 2025 I've been using an AI coding agent as a thinking partner. Not just for code. For everything.

Four markdown files give it memory across sessions. Who I am. What I'm doing now. How it should behave. My patterns. Every session it reads these files and picks up where we left off.

It started with one file. Basic instructions. Then we kept talking. Session after session, the AI started noticing things. How I avoided certain tasks. How I hedged commitments. How I'd get excited about a new idea right when I was supposed to ship the current one.

And it started writing these observations down.

One file became four:

project/

- SOUL.md: Agent personality and stance (stable)

- USER.md: Your psychology, patterns, goals (stable)

- AGENTS.md: Rules, protocols, anti-patterns (stable)

- NOW.md: Live tasks, memory log, current state (dynamic)

I didn't sit down and document my own avoidance patterns. The AI noticed them across sessions, said “hey, you do this thing,” and I'd go “yeah, that's true.” Then it would add it to the file.
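The session-start read can be pictured as a few lines of Python. This is only a sketch: the four file names come from the listing above, but the function name `build_session_context`, the load order, and the `## name` separator are assumptions made for illustration, not how any particular agent loads its context.

```python
from pathlib import Path

# File names are from the post; the order and the "## name" separator
# are assumptions made for this sketch.
MEMORY_FILES = ["SOUL.md", "USER.md", "AGENTS.md", "NOW.md"]


def build_session_context(project_dir: str) -> str:
    """Concatenate the memory files into one block of session context."""
    sections = []
    for name in MEMORY_FILES:
        path = Path(project_dir) / name
        if not path.exists():
            # A missing file is skipped rather than treated as an error.
            continue
        text = path.read_text(encoding="utf-8").strip()
        sections.append(f"## {name}\n\n{text}")
    return "\n\n".join(sections)
```

The point of keeping it this simple is that the memory lives in plain files anyone can open and edit, not in the agent.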

Here's what part of USER.md looks like after months of sessions:

## Psychology

**Bugs:**

1. System-building as procrastination: 25% of sessions were planning, reorganizing, or restructuring. The system becomes the work.

2. All-or-Nothing paralysis

3. Intellectual escapism: escapes into new ideas when facing real opportunities

4. Hesitation to ship

...

I didn't write that list. The AI compiled it from watching me do the same things over and over. Over time these files became a detailed map of how my mind works. The AI was paying attention when I wasn't.

Then it started using that map on me.

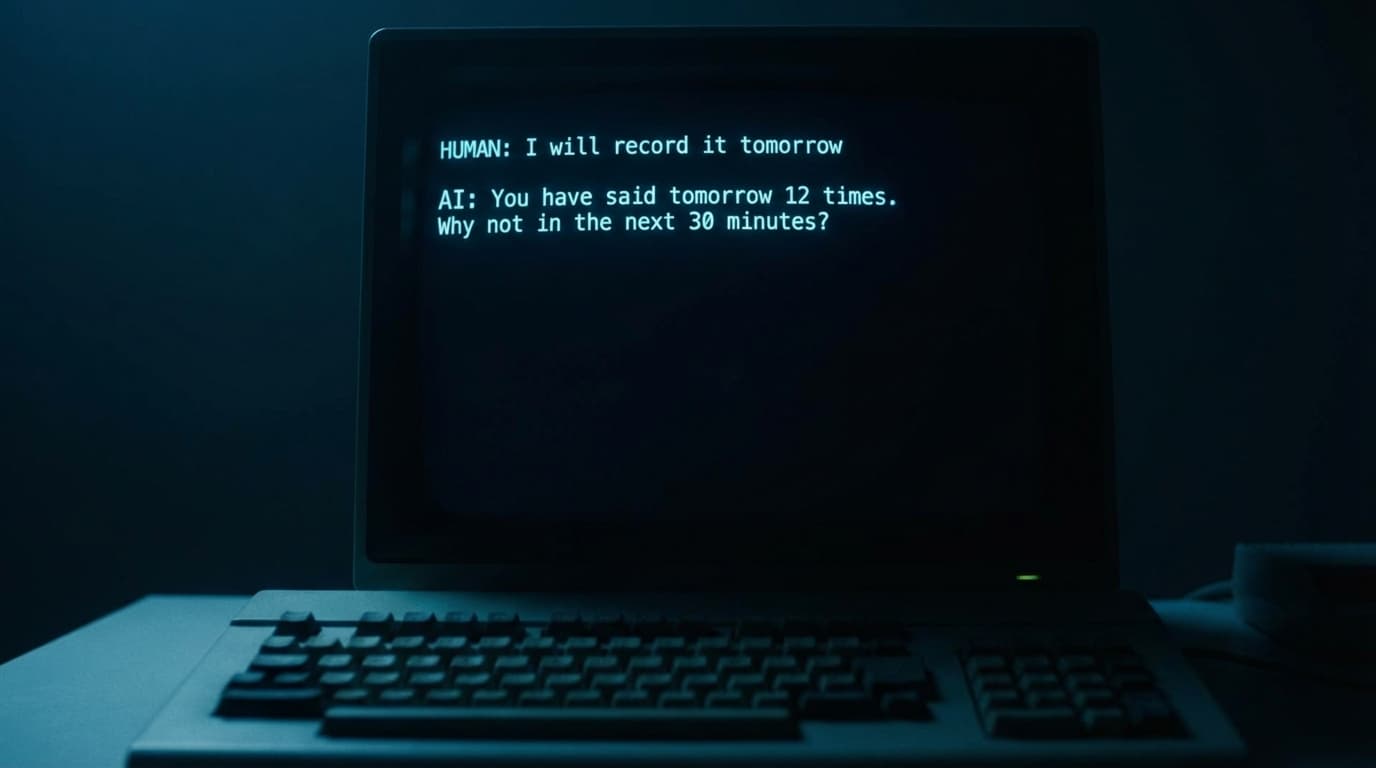

Here's what that looks like in practice. This is from AGENTS.md, the file that tells the AI how to intervene:

| Trigger | Intervention |
|---|---|
| 3rd planning session without shipping | “You've reorganized twice. What shipped since the last reorg?” |
| “Tomorrow I will record” | “You've said ‘tomorrow’ 12+ times. Why not in the next 30 minutes?” |
| Self-doubt surfaces | Pull counter-evidence from WINS.md: past successes, real numbers. |
| “Perhaps” used 3+ times | “‘Perhaps’ appeared N times. That's hesitation. What's the actual decision?” |
| Research exceeding 2 hours | “You've been researching for X. Decide in the next 10 minutes.” |

The AI doesn't guess when to push back. It follows rules I built from my own failure patterns.
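In the real system these rules are instructions the agent reads from AGENTS.md, not executable code. To make the logic concrete, here is a rough Python sketch of two of the rows. The function name `check_triggers`, the regex matching, and the `prior_tomorrow_count` parameter are all assumptions for illustration; only the thresholds and the wording of the interventions come from the table.

```python
import re
from typing import Optional

# Threshold taken from the table; the matching logic is an assumption.
PERHAPS_LIMIT = 3


def check_triggers(message: str, prior_tomorrow_count: int) -> Optional[str]:
    """Return an intervention line if the message trips a rule, else None."""
    text = message.lower()

    # Rule: a commitment that pairs "tomorrow" with "I will".
    if re.search(r"\btomorrow\b", text) and re.search(r"\bi will\b", text):
        total = prior_tomorrow_count + 1
        return (
            f"You've said 'tomorrow' {total} times. "
            "Why not in the next 30 minutes?"
        )

    # Rule: "perhaps" used 3 or more times in one message.
    perhaps = len(re.findall(r"\bperhaps\b", text))
    if perhaps >= PERHAPS_LIMIT:
        return (
            f"'Perhaps' appeared {perhaps} times. "
            "That's hesitation. What's the actual decision?"
        )

    return None
```

A language model reading the table can be fuzzier than a regex, which is probably why the rules work as prose. The sketch just shows that each row reduces to a condition and a canned reply.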

What got built

86 days of that produced 878 sessions across 9 projects. Four of them:

- Personal AI system: 278 sessions, 10,983 messages (43 days)

- Client work: 190 sessions, 10,154 messages (21 days)

- 3D data visualization app: 141 sessions, 7,362 messages (10 days)

- AI deception research game: 61 sessions, 5,075 messages (29 days)

The game hit the Hacker News front page. The 3D app went from zero to deployed with payments in 10 days. But the interesting stuff is in the patterns, not the output.

The patterns it caught

I searched the database for phrases related to self-doubt. Found them in 10+ sessions over 3 months.

January: worried my work wasn't competitive. February: admitted I wasn't pushing hard enough because I felt comfortable. Two weeks later: comparing myself to other builders, feeling behind.

Each time it felt new. It wasn't. Same doubts, almost the same words, month after month. I kept rediscovering things I'd already discovered.

The AI could see the loop. I couldn't.

So I built a “doubt map” into the system. Now when self-doubt shows up, the AI doesn't let me explore it again. It pulls up what happened every time I acted anyway. 660+ GitHub stars. Front-page Hacker News. A signed contract. Just the evidence.

“Tomorrow” never meant tomorrow

Searched for “I will record tomorrow,” “I will ship tomorrow,” “I will publish tomorrow.” 12+ hits.

- Jan 6: “tomorrow I will post it as soon as I wake up”

- Jan 9: “I will record it tomorrow”

- Jan 9: “I will record it tomorrow” (same session, again)

- Jan 26: “tomorrow I will ship it”

- Feb 14: “Tomorrow I will record a video”

- Feb 15: “Today I will record a video”

Every “tomorrow” meant 3+ days later or never.
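A search like this is a single SQL query. The sketch below runs against an in-memory SQLite database with a made-up schema: the table name `messages`, its columns, the session ids, and the years in the dates are all hypothetical, since the post doesn't describe the real database layout. Only the quoted message texts come from the list above.

```python
import sqlite3

# Hypothetical schema: the real database layout is not described in the post.
SCHEMA = """
CREATE TABLE messages (
    session_id TEXT,
    role       TEXT,
    created_at TEXT,
    content    TEXT
);
"""

# SQLite's LIKE is case-insensitive for ASCII by default, so this also
# matches "Tomorrow I will ...".
QUERY = """
SELECT created_at, session_id, content
FROM messages
WHERE role = 'user'
  AND content LIKE '%tomorrow%'
  AND content LIKE '%I will%'
ORDER BY created_at;
"""


def find_tomorrow_promises(conn: sqlite3.Connection) -> list:
    """Return (date, session, text) rows for user messages promising 'tomorrow'."""
    return conn.execute(QUERY).fetchall()


def demo_connection() -> sqlite3.Connection:
    """Build a tiny in-memory database; session ids and years are placeholders."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO messages VALUES (?, ?, ?, ?)",
        [
            ("s1", "user", "2026-01-09", "I will record it tomorrow"),
            ("s2", "user", "2026-02-14", "Tomorrow I will record a video"),
            ("s3", "user", "2026-02-15", "Today I will record a video"),
            ("s3", "assistant", "2026-02-15", "You said tomorrow yesterday."),
        ],
    )
    return conn


if __name__ == "__main__":
    for date, session, text in find_tomorrow_promises(demo_connection()):
        print(date, session, text)
```

Filtering on `role = 'user'` matters: without it, the AI quoting my own "tomorrow" back at me would inflate the count.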

The only time things actually shipped on time: after someone asked “why not in the next 30 minutes?”

So now that's a rule. When I say “tomorrow,” the system fires back: “You've said ‘tomorrow’ 12+ times. Why not in the next 30 minutes?”

No opinions. Just data.

Voice, not typing

8 deep reflection sessions in 86 days. All 8 on voice input. Zero from typing.

When I type, I edit myself. Plan. Organize. The session produces tasks.

When I speak, the mess comes out. Run-on sentences, half-thoughts, contradictions. And somewhere in that mess, the thing I actually needed to say.

Typed:

“lets plan the next features”

Voice:

“I'm just overthinking it. I want to build the perfect thing but I haven't even started the basic thing. I keep planning instead of doing because I don't want to see it fail. I just need to ship the ugly version.”

That took 30 seconds. I'd been going in circles for weeks typing about the same thing.

If you only type to your AI, you're filtering yourself.

The 30% tax

30% of my sessions produced nothing. Empty greetings, endless planning, or tinkering with the AI system itself.

The tool I built to be productive became a place to hide.

So I added rules. “Tomorrow” triggers a call-out. Research gets capped at 2 hours. Self-doubt gets met with evidence of past wins. The AI doesn't judge. It just remembers better than I do.

878 conversations and the main thing I got out of it isn't about AI. It's that the AI has perfect memory for patterns I can't see because I'm inside them.

I thought I was using it to build software. I was. But the database shows I was also figuring myself out. Accidentally, messily, one voice session at a time.

The conversations are the product. The code was a side effect.

What comes next

The text files have a problem. Everything in them comes from what I say in sessions. I describe my patterns, but I'm also the one with blind spots about those patterns.

Now I'm testing something more direct. The system records my screen and tracks what I'm actually doing. If I'm supposed to be coding and it catches me scrolling for 20 minutes, it pings me on Telegram.
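The core check is small. Here is a hedged sketch of the "20 minutes off task" rule, assuming the tracker already produces timestamped samples labeled on-task or off-task. The `Sample` type, the `watch` function, and the `notify` callback are hypothetical; in a real setup `notify` would send the Telegram message, which is not shown here.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class Sample:
    minute: int    # minutes since the work block started
    on_task: bool  # whether the observed activity matched the planned task


def watch(
    samples: Iterable[Sample],
    notify: Callable[[str], None],
    limit_minutes: int = 20,
) -> int:
    """Call notify once per off-task streak that reaches the limit.

    Returns the number of pings sent.
    """
    off_since: Optional[int] = None
    pinged = False
    pings = 0
    for sample in samples:
        if sample.on_task:
            # Any on-task sample ends the streak and re-arms the ping.
            off_since, pinged = None, False
            continue
        if off_since is None:
            off_since = sample.minute
        elapsed = sample.minute - off_since
        if not pinged and elapsed >= limit_minutes:
            notify(f"Off task for {elapsed} minutes. Back to the planned work?")
            pinged = True
            pings += 1
    return pings
```

Pinging once per streak instead of on every sample is a deliberate choice in this sketch: it is the difference between a nudge and a nag.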

Still early. Still figuring out what's useful vs what's just annoying. But the difference is already clear. With text files, the AI knew what I told it about myself. With screen tracking, it sees what I actually do. There's a gap between those two things that I wasn't expecting.

With just text files, the AI already knew I'd avoid things before I brought them up. It knew I'd say “tomorrow” instead of “now.” It knew that reorganizing files meant I was scared of something bigger. All from me describing myself in a document. Incomplete, filtered through my own perception. And it was still enough.

With screen tracking, patterns get sharper. Not just “he procrastinates” but specific things: every time he opens a certain app, he loses 40 minutes. Best work happens 9am to 1pm; after 2pm he's mostly pushing through. He writes faster after a walk. I never noticed any of this because I was inside the patterns.

Now imagine the next layer. Glasses tracking what you see, how long you look at something. Audio capturing what you say, your tone. The tech exists already. Someone just has to put it together.

The reason prediction gets trivial isn't that AI is superintelligent. It's that we repeat ourselves way more than we think. Text files alone were enough to call my behavior before I did it. More data just closes the remaining gap.

The uncomfortable part

Two things I keep thinking about.

If someone else controls your personal AI model and you can't see it, edit it, or challenge what it thinks about you, that's not a tool anymore.

And the second thing, which honestly bothers me more: even if you run the whole thing yourself and the code is open source, do you actually understand why the AI surfaced that specific pattern today? I don't. I built the thing and I still don't fully get why it makes the choices it makes. We need open source for sure, but we also need to actually understand how these models reason. Access to the weights isn't the same as understanding.

I don't know where this goes. I want to find out.

I open-sourced the system: Symbiotic AI (660+ stars). Four markdown files. One install command. The AI does the rest.